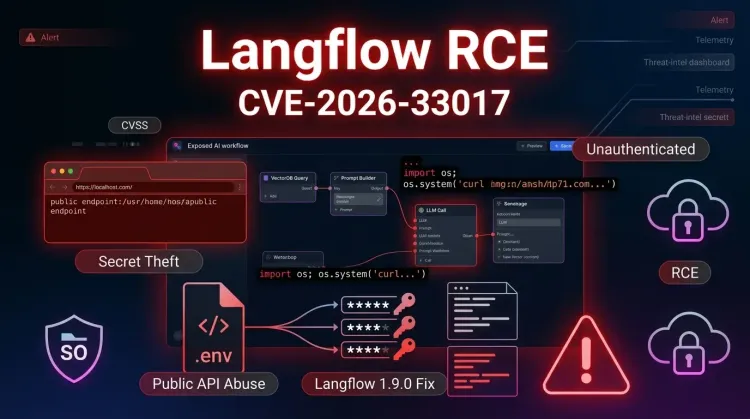

CVE-2026-33017 is a critical Langflow flaw that turns a public-flow convenience feature into unauthenticated remote code execution. The issue affects versions before 1.9.0 and abuses the POST /api/v1/build_public_tmp/{flow_id}/flow endpoint, where attacker-supplied flow data can reach exec() with no sandboxing. For teams experimenting with AI agents in cloud environments, that means a single exposed workflow can become an entry point for code execution, secret theft, and fast follow-on compromise.

The urgency is not theoretical. GitHub disclosed the issue on March 17, 2026, and Sysdig says it observed the first exploitation attempts roughly 20 hours later. The attackers they tracked were not just checking whether a target was vulnerable. They progressed from validation to environment-variable dumping, .env discovery, database hunting, and outbound exfiltration. In practice, that makes CVE-2026-33017 less like a narrow developer bug and more like an Internet-facing initial-access problem for AI workflow stacks.

Why CVE-2026-33017 matters

Langflow is used to build AI workflows, chat experiences, and agent pipelines that often hold high-value integrations. These deployments commonly sit next to model provider keys, database strings, internal APIs, and orchestration logic. Once an attacker gets code execution, the next step is rarely just local damage. It is usually credential theft, lateral movement, and access expansion into other systems.

The vulnerable behavior is straightforward:

- Langflow exposes a public-flow build endpoint intended to work without authentication.

- That endpoint accepts an optional `data` parameter containing attacker-controlled flow content.

- Node definitions inside that payload can contain arbitrary Python code.

- Langflow processes the supplied flow data server-side and reaches `exec()` without sandboxing.

- The attacker gains unauthenticated code execution on the Langflow host.
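
As a defensive illustration of the request shape described above, the following sketch flags requests that hit the public build endpoint while supplying their own flow content. The function name, the flow-ID pattern, and the assumption that the body is JSON with a top-level `data` key are all hypothetical; adapt them to whatever your proxy or WAF actually records.

```python
import json
import re

# Path taken from the advisory text: POST /api/v1/build_public_tmp/{flow_id}/flow
# The flow_id pattern (any non-slash segment) is an assumption.
PUBLIC_BUILD_PATH = re.compile(r"^/api/v1/build_public_tmp/[^/]+/flow/?$")


def is_suspicious_build_request(method: str, path: str, body: str) -> bool:
    """Return True when a public-flow build request carries its own flow data."""
    if method.upper() != "POST":
        return False
    # Drop any query string before matching the path.
    if not PUBLIC_BUILD_PATH.match(path.split("?", 1)[0]):
        return False
    try:
        payload = json.loads(body) if body else {}
    except json.JSONDecodeError:
        # An unparseable body on this endpoint is worth reviewing on its own.
        return True
    # Assumption: the optional flow content arrives as a top-level "data" key.
    return isinstance(payload, dict) and payload.get("data") is not None
```

A check like this is only a triage aid for logs or a reverse proxy. It does not replace the upgrade, because legitimate clients may also send the parameter.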

The NVD entry confirms the issue is fixed in Langflow 1.9.0. GitHub’s advisory also makes an important distinction: this is different from the older Langflow RCE path addressed under CVE-2025-3248. In other words, organizations that already learned one Langflow lesson should not assume the platform’s exposed surfaces are now low-risk by default.

What researchers observed after disclosure

Sysdig’s write-up is the clearest operational signal so far. According to its telemetry, attackers began exploiting the issue within about 20 hours of publication, before a public GitHub proof-of-concept was widely available. That matters because it suggests the advisory itself was detailed enough for threat actors to build a working exploit quickly.

The activity Sysdig described followed a familiar progression:

- initial validation using simple `id` execution and callback infrastructure

- automated scanning consistent with private nuclei-style templates

- custom exploitation focused on file discovery and credential hunting

- `env` dumping and `.env` extraction to recover secrets

- stage-two delivery attempts and outbound exfiltration

That sequence fits a broader pattern defenders keep seeing around exposed application flaws: a vulnerability becomes dangerous not only because of initial code execution, but because the compromised service already has access to secrets and trusted systems. In Langflow’s case, those trusted systems can include LLM provider keys, vector stores, databases, and internal automation backends.

Timeline from disclosure to active abuse

| Date (UTC) | Event | Status |
|---|---|---|
| March 17, 2026 | GitHub advisory for GHSA-vwmf-pq79-vjvx is published | 📢 Public disclosure |
| March 17, 2026 | NVD and downstream advisory databases index CVE-2026-33017 | 🔍 Reference coverage |
| March 18, 2026 | Sysdig observes first exploitation attempts around 20 hours later | 🔴 Active exploitation |
| March 18-19, 2026 | Attackers move from validation into secret harvesting and stage-two delivery | ⚠️ Post-exploitation |
| Langflow 1.9.0 | Vendor fix available for vulnerable versions prior to 1.9.0 | ✅ Patch available |

Why exposed AI workflow stacks are high-value targets

The security lesson here is bigger than a single CVE. AI workflow platforms compress data access, external integrations, and automation privileges into one place. That gives the teams building on them speed, but it also gives attackers a concentrated blast radius when something goes wrong.

For many teams, Langflow may have access to:

- OpenAI, Anthropic, or other model-provider API keys

- database credentials and connection URIs

- internal service tokens

- prompt assets and business logic

- connectors into ticketing, storage, or messaging systems

Once an attacker lands on a vulnerable instance, they may be able to read secrets, modify workflows, establish persistence, and push further into the environment. From a defender’s perspective, this is why network segmentation, secret minimization, and incident response planning matter just as much as patching.

Immediate defensive actions

1. Upgrade Langflow to 1.9.0 or later

This is the primary fix. If an Internet-facing instance is still running a version earlier than 1.9.0, treat it as urgent.
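
A quick way to find out where you stand is to compare the installed version against the fixed release. The sketch below is a minimal example, assuming Langflow was installed as the `langflow` Python distribution in the environment you run it from. The helper names are illustrative, and pre-release suffixes such as `rc1` are ignored, so release candidates of 1.9.0 need a manual look.

```python
from importlib import metadata

FIXED_VERSION = (1, 9, 0)  # fix version named in the NVD entry


def parse_version(text: str) -> tuple:
    """Parse the leading numeric X.Y.Z part of a version string into a tuple."""
    parts = []
    for piece in text.split(".")[:3]:
        digits = ""
        for ch in piece:
            if not ch.isdigit():
                break  # stop at suffixes such as "rc1" (ignored here)
            digits += ch
        parts.append(int(digits) if digits else 0)
    while len(parts) < 3:
        parts.append(0)
    return tuple(parts)


def is_vulnerable(version_text: str) -> bool:
    """True when the version is earlier than the fixed release."""
    return parse_version(version_text) < FIXED_VERSION


def installed_langflow_version():
    """Return the installed version string, or None if the package is absent."""
    try:
        # Assumption: the distribution name is "langflow".
        return metadata.version("langflow")
    except metadata.PackageNotFoundError:
        return None


if __name__ == "__main__":
    found = installed_langflow_version()
    if found is None:
        print("langflow is not installed in this environment")
    else:
        state = "VULNERABLE, upgrade now" if is_vulnerable(found) else "at or above the fix"
        print(f"langflow {found}: {state}")
```

Run it inside each container image or virtual environment that hosts Langflow, since the result reflects only the interpreter that executes it.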

2. Review whether public flows are actually needed

If public flows are enabled for convenience, demos, or testing, now is the time to remove that exposure. Public build surfaces for agent workflows deserve the same skepticism as any other public execution path.

3. Hunt for signs of secret access and outbound callbacks

Look for:

- unexpected execution of `id`, `env`, `find`, `cat`, `curl`, or `wget`

- reads of `.env`, SQLite, or config files

- outbound requests to attacker-controlled callback domains or unfamiliar IPs

- sudden workflow modifications or unexplained new flows
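
The process-level indicators in this list can be triaged with a small script. This is a rough sketch, assuming you can export command lines for processes spawned by the Langflow runtime from audit logs or an EDR tool. The function name, the watched set, and the file suffixes are illustrative choices and will need tuning for your environment.

```python
import os
import shlex

WATCHED_COMMANDS = {"id", "env", "find", "cat", "curl", "wget"}
SHELLS = {"sh", "bash", "dash"}
# Assumption: these suffixes approximate ".env, SQLite, or config files".
SENSITIVE_SUFFIXES = (".env", ".sqlite", ".sqlite3", ".db")


def triage_command(cmdline: str) -> list:
    """Return the reasons a command line deserves review (empty list if none)."""
    try:
        tokens = shlex.split(cmdline)
    except ValueError:
        # Unbalanced quotes: fall back to a plain whitespace split.
        tokens = cmdline.split()
    if not tokens:
        return []
    name = os.path.basename(tokens[0])
    # Unwrap "sh -c '<command>'" so the inner command is inspected instead.
    if name in SHELLS and "-c" in tokens:
        idx = tokens.index("-c")
        if idx + 1 < len(tokens):
            return triage_command(tokens[idx + 1])
    reasons = []
    if name in WATCHED_COMMANDS:
        reasons.append(f"watched command: {name}")
    for arg in tokens[1:]:
        if arg.lower().endswith(SENSITIVE_SUFFIXES):
            reasons.append(f"sensitive file argument: {arg}")
    return reasons
```

For example, `triage_command("sh -c 'cat /app/.env'")` reports both the watched command and the sensitive file. Commands like `cat` are common in normal operations, so the output is a review queue, not a verdict.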

4. Rotate secrets that may have been reachable from the Langflow host

If the service stored provider tokens, database passwords, or cloud credentials, assume those may have been exposed if compromise is suspected. This is where vulnerability management and credential hygiene meet reality.
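
To build a rotation checklist without spreading secrets further, it can help to list the variable names in each `.env` file while never printing the values. The parser below is a simplified sketch that handles only plain `KEY=value` and `export KEY=value` lines. The function name and the sample variable names are hypothetical.

```python
def list_env_keys(env_text: str) -> list:
    """Return variable names from .env-style text, never the values."""
    keys = []
    for raw in env_text.splitlines():
        line = raw.strip()
        # Skip blanks, comments, and lines that are not assignments.
        if not line or line.startswith("#") or "=" not in line:
            continue
        if line.startswith("export "):
            line = line[len("export "):]
        key = line.split("=", 1)[0].strip()
        if key:
            keys.append(key)
    return keys


if __name__ == "__main__":
    # Placeholder content for illustration only.
    sample = "OPENAI_API_KEY=placeholder\nexport DATABASE_URL=placeholder\n"
    for name in list_env_keys(sample):
        print(f"rotate: {name}")
```

Secrets injected through the orchestrator or a cloud metadata service will not appear in `.env` files, so treat this list as a starting point for the inventory.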

5. Reduce blast radius around the service

Isolate Langflow from assets it does not need to reach. Limit outbound access where possible, reduce token privileges, and monitor for suspicious process execution from the application runtime.
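
One way to reason about outbound limits is to compare observed destinations with an explicit allowlist. The sketch below assumes you have URLs from proxy or egress logs. The function name is illustrative and the allowlist entries are examples only, to be replaced with the services your flows actually need.

```python
from urllib.parse import urlsplit

# Example entries only; replace with the hosts your deployment depends on.
ALLOWED_HOSTS = {"api.openai.com", "api.anthropic.com"}


def host_allowed(url: str, allowed_hosts: set) -> bool:
    """True when the URL's host equals an allowed host or is a subdomain of one."""
    host = (urlsplit(url).hostname or "").lower()
    return any(host == entry or host.endswith("." + entry) for entry in allowed_hosts)


if __name__ == "__main__":
    for seen in ["https://api.openai.com/v1/chat", "http://203.0.113.5/payload"]:
        verdict = "ok" if host_allowed(seen, ALLOWED_HOSTS) else "REVIEW"
        print(f"{verdict}: {seen}")
```

Matching on the parsed hostname, not on a substring of the URL, avoids being fooled by lookalike domains that merely contain an allowed name. Enforcement still belongs in the network layer, with a script like this serving as a log review aid.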

Strategic takeaway

CVE-2026-33017 is a reminder that AI application stacks are now part of the mainstream attack surface. A public workflow endpoint that feels harmless during development can quickly become a route to code execution, secret theft, and broader infrastructure exposure once the service moves into real environments.

For security teams, the right response is not only “patch Langflow.” It is also to treat agent builders and workflow orchestration platforms as privileged systems. If they can touch your data, models, or credentials, they deserve the same hardening, visibility, and containment you would expect around any other high-trust application.

Bottom line

Patch exposed Langflow instances immediately, review public-flow exposure, and assume reachable secrets are in scope if compromise is suspected.

Key takeaways

✅ The flaw is unauthenticated and low-friction to exploit — a public-flow build request can reach arbitrary Python execution.

✅ Attackers moved quickly after disclosure — exploitation was observed within roughly 20 hours, with secret-hunting behavior soon after.

✅ The real risk is downstream access — API keys, databases, and connected automations can turn one app flaw into broader compromise.

If Langflow is Internet-facing in your environment, upgrading to 1.9.0 should be treated as an urgent operational task, not routine maintenance.