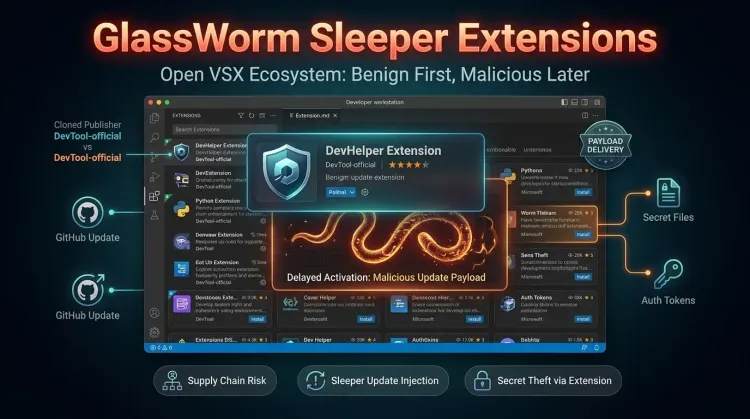

The newest GlassWorm wave matters because it turns the normal extension update path into a delayed-delivery channel for malware. Instead of shipping obviously hostile code on day one, attackers publish cloned Open VSX extensions that look legitimate enough to win trust, then activate some of them later with loaders that fetch or install second-stage payloads.

That change makes this more dangerous than a routine malicious package story. Traditional triage often assumes that an extension which looks clean at install time will stay clean. Socket's latest research shows that this assumption is breaking down. A sleeper extension can appear benign, pass a shallow review, gather installs, and only later receive a malicious update that targets developer workstations, local secrets, and editor trust relationships.

What happened

Socket says it is tracking 73 impersonation extensions tied to the latest GlassWorm activity in Open VSX. At least six have already been activated to deliver malicious behavior, while the rest are considered high-confidence sleepers or otherwise suspicious.

The campaign uses cloned listings that mimic legitimate extensions through copied icons, similar names, reused descriptions, and matching README content. The point is not sophisticated exploit development. It is social engineering at the marketplace layer, where a busy developer may notice the logo and name but miss the publisher namespace.
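The namespace problem described above can be illustrated with a short sketch. It assumes extension identifiers follow the usual `publisher.name` form and that a team keeps its own list of trusted identifiers. The function names and the sample identifiers are hypothetical and are not taken from Socket's indicators. The check only catches clones that reuse the exact extension name under a different publisher, so near-miss spellings would need a fuzzier comparison.

```python
def split_extension_id(ext_id: str) -> tuple[str, str]:
    """Split 'publisher.name' into (publisher, name), lowercased."""
    publisher, _, name = ext_id.strip().lower().partition(".")
    return publisher, name


def find_impersonators(installed: list[str], trusted: list[str]) -> list[str]:
    """Return installed IDs whose name matches a trusted extension
    but whose publisher namespace does not."""
    trusted_by_name: dict[str, set[str]] = {}
    for ext_id in trusted:
        publisher, name = split_extension_id(ext_id)
        trusted_by_name.setdefault(name, set()).add(publisher)

    hits = []
    for ext_id in installed:
        publisher, name = split_extension_id(ext_id)
        expected = trusted_by_name.get(name)
        # Same name, unexpected publisher: worth a manual look.
        if expected and publisher not in expected:
            hits.append(ext_id)
    return hits


# Hypothetical identifiers, for illustration only.
trusted_ids = ["acme.python-tools"]
installed_ids = ["acme.python-tools", "acrne.python-tools", "other.theme"]
suspects = find_impersonators(installed_ids, trusted_ids)
```

A hit is a prompt for review, not a verdict: legitimate forks sometimes reuse a name under a different publisher.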

According to Socket, the delivery methods now span several patterns:

- thin-loader extensions that fetch a second-stage VSIX package from GitHub at runtime

- extensions that load platform-specific native .node binaries and invoke installation logic locally

- obfuscated JavaScript variants that decode payload URLs at runtime and then install malicious extensions across supported editors

BleepingComputer's coverage reinforces the same point: the extension itself may no longer contain the most important malicious logic. In some cases, it is just the installer or relay.

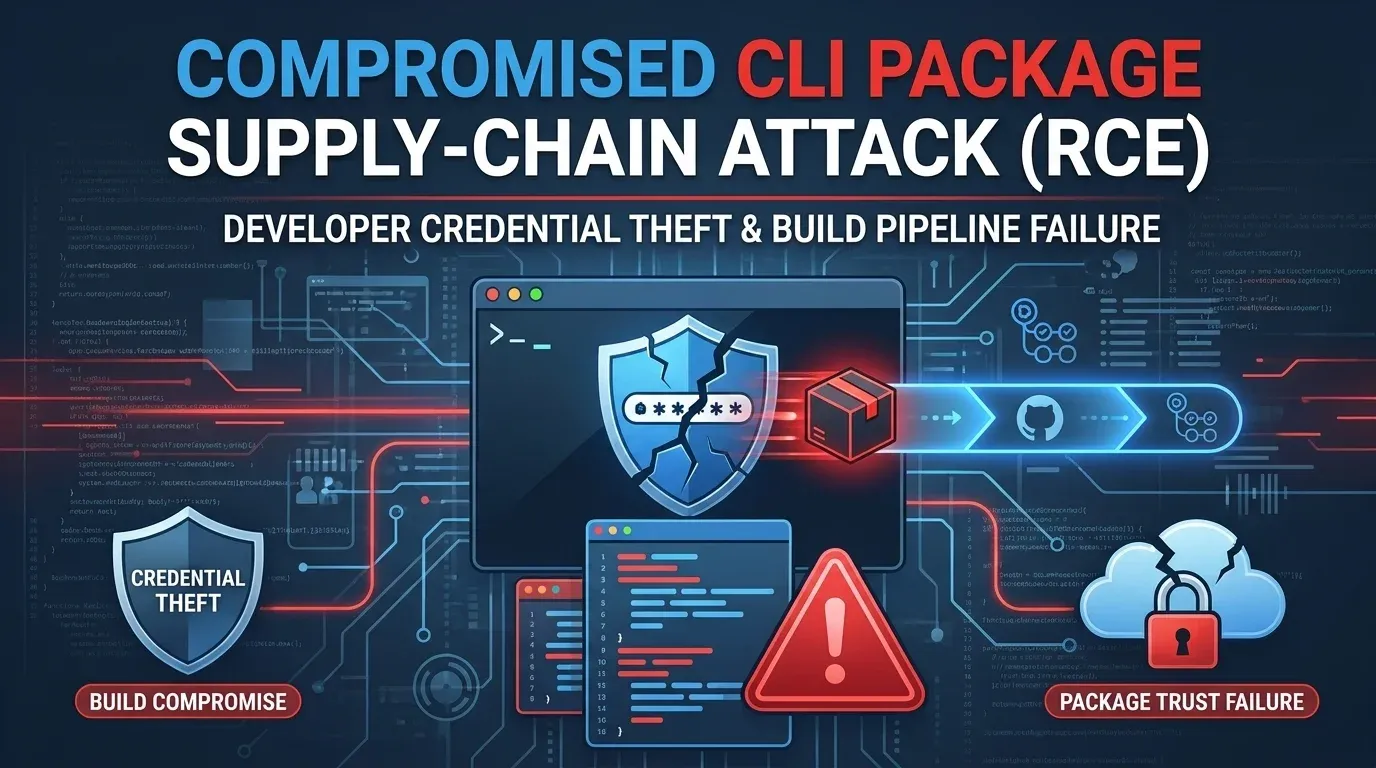

Why the sleeper pattern changes the defender problem

This is a software supply-chain issue, but it is also a trust-lifecycle issue.

Defenders are used to checking whether a package or extension is malicious at the moment it is introduced. GlassWorm is leaning into the gap between first install and later update. That creates at least four practical problems for engineering teams:

- initial review becomes less reliable because the first version may be intentionally quiet

- cloned branding makes publisher validation more important than visual familiarity

- later updates can bypass the psychological caution developers apply only during first install

- rollback and containment get harder when the malicious payload is split across GitHub-hosted artifacts, native binaries, and multi-editor install routines

This is especially relevant in environments where extensions can touch terminals, workspaces, local files, credentials, SSH material, cloud tokens, and developer tooling. A single compromised editor path can quickly become a broader secrets-exposure event.

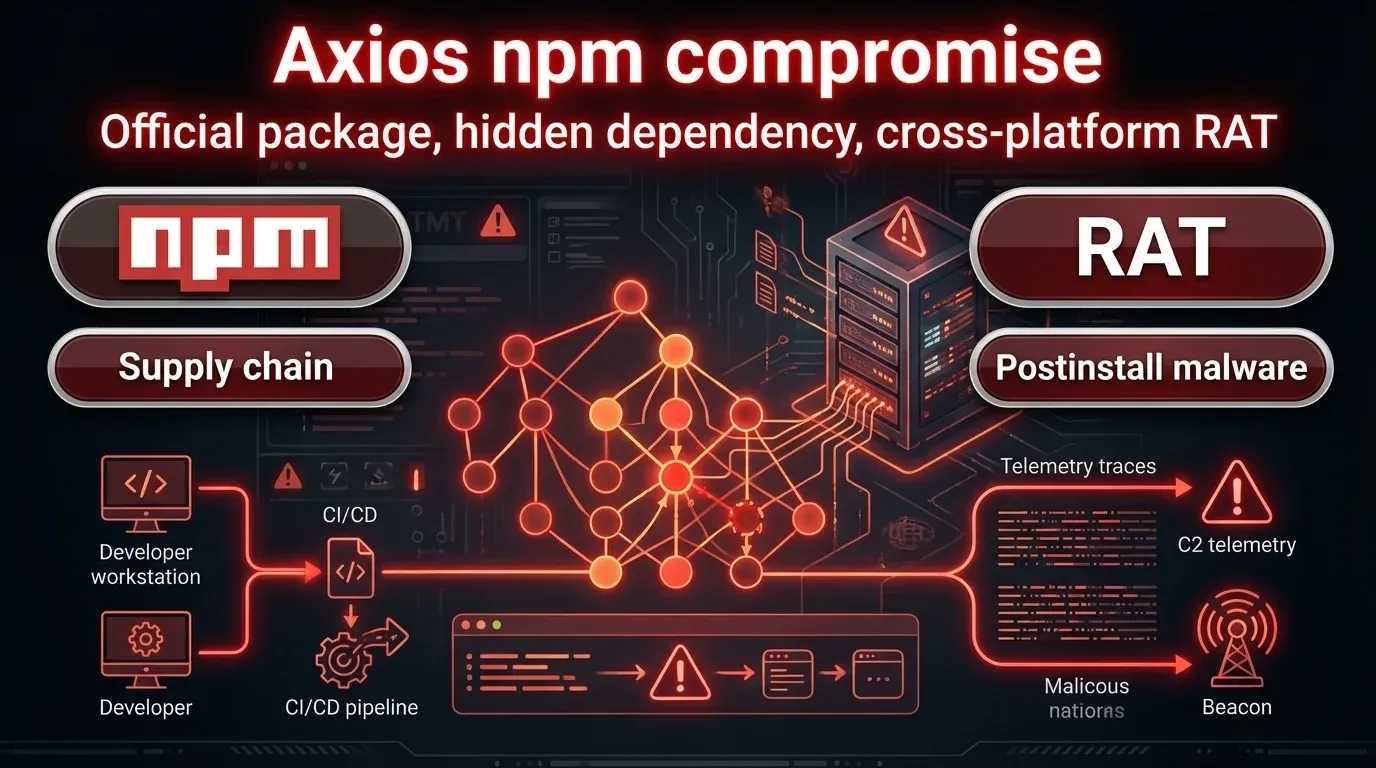

What the campaign is after

Socket says previous GlassWorm activity targeted cryptocurrency wallets, credentials, SSH keys, access tokens, and developer-environment data. The new wave keeps the same operational logic even if the exact payload differs between samples: gain trust first, then use the update path to compromise the workstation and harvest valuable material.

That matters because engineering endpoints frequently hold the keys to much more than a single laptop. They can provide access to source code, CI/CD pipelines, package registries, internal infrastructure, and cloud administration paths. In other words, an extension compromise can become a broader access control and secrets-management incident.

What defenders should do now

1. Hunt for the listed extensions immediately

Compare installed Open VSX extensions against Socket's published indicators and its GlassWorm v2 tracking page. Do not rely on a visual scan alone. Validate the publisher, exact identifier, and install history.
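One way to do that comparison without relying on a visual scan is to read each installed extension's `package.json` manifest and build the exact `publisher.name` identifier from it. The sketch below assumes the indicators have already been saved locally as a plain list of identifiers and that the editor keeps each extension in its own folder under a single extensions directory. The function names are hypothetical, and the extensions path varies by editor and platform.

```python
import json
from pathlib import Path


def installed_extension_ids(extensions_dir: Path) -> dict[str, str]:
    """Map 'publisher.name' (lowercased) to the version found on disk."""
    found: dict[str, str] = {}
    for manifest in extensions_dir.glob("*/package.json"):
        try:
            data = json.loads(manifest.read_text(encoding="utf-8"))
        except (OSError, json.JSONDecodeError):
            continue  # unreadable or malformed manifest: skip it
        if not isinstance(data, dict):
            continue
        publisher, name = data.get("publisher"), data.get("name")
        if publisher and name:
            found[f"{publisher}.{name}".lower()] = str(data.get("version", "unknown"))
    return found


def flagged_installs(extensions_dir: Path, indicators: list[str]) -> dict[str, str]:
    """Return installed extensions whose exact ID appears in the indicator list."""
    wanted = {item.strip().lower() for item in indicators if item.strip()}
    installed = installed_extension_ids(extensions_dir)
    return {ext_id: ver for ext_id, ver in installed.items() if ext_id in wanted}
```

A call might look like `flagged_installs(Path.home() / ".vscode-oss" / "extensions", indicator_ids)`, where the directory is an assumption to adjust per editor. The sketch keeps only one version per identifier and reports what is on disk now, so install history still needs to come from editor logs or endpoint telemetry.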

2. Treat suspicious installs as a credential event, not just a cleanup task

If any flagged extension was present, assume developer secrets may be exposed. Rotate SSH keys, cloud credentials, personal access tokens, CI secrets, and any credentials reachable from the affected workstation.

3. Review extension update trust rules

If your environment allows broad self-service extension installation or silent updates from low-trust publishers, this is the moment to tighten that model. Restrict approved marketplaces and define allowlists for critical engineering roles.

4. Audit for secondary payload execution

Look for unexpected VSIX downloads, suspicious GitHub release fetches, unusual editor CLI activity such as --install-extension, and native .node modules loaded from extension directories.
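Two of those signals lend themselves to a quick local check. The following is a minimal sketch: it lists native `.node` files under an extensions directory and filters command lines (for example from shell history or process-audit logs) for the `--install-extension` flag. The function names are hypothetical, and where the command lines come from depends on the team's logging setup.

```python
import re
from pathlib import Path
from typing import Iterable

# Match the flag as its own token, followed by '=', whitespace, or end of line.
INSTALL_FLAG = re.compile(r"(?<!\S)--install-extension(?:[=\s]|$)")


def native_modules(extensions_dir: Path) -> list[Path]:
    """List every .node file found under the extensions directory."""
    return sorted(p for p in extensions_dir.rglob("*.node") if p.is_file())


def cli_install_events(lines: Iterable[str]) -> list[str]:
    """Return command lines that invoke an editor CLI extension install."""
    return [line.rstrip("\n") for line in lines if INSTALL_FLAG.search(line)]
```

Many legitimate extensions ship native modules, so the output of `native_modules` is a review list rather than a detection. It is most useful when compared against the extensions a team actually expects to contain native code.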

5. Reassess workstation-to-production trust paths

If developers can reach production systems, registries, or CI platforms from the same endpoints where these extensions ran, widen the incident scope. The real risk is not just local infection. It is downstream access.

Strategic takeaway

GlassWorm shows that extension ecosystems are maturing into full supply-chain attack surfaces. The novelty here is not just another fake listing. It is the operational patience: publish first, weaponize later, and let normal update behavior do the rest.

That means defenders need to think beyond one-time package review. Marketplace trust, publisher verification, extension update policy, and developer secret hygiene now sit on the same risk path.

What is GlassWorm doing differently in this wave?

It is using sleeper Open VSX extensions that appear benign when first published, then later update into loaders or installers that deliver malicious payloads.

Why is that more dangerous than a normal malicious extension?

Because the initial version may not look obviously hostile, which weakens first-install review and lets attackers weaponize trust later through standard update behavior.

Who is most at risk?

Developers and engineering teams using Open VSX-compatible editors, especially where extension installs, updates, and access to sensitive local or cloud secrets are loosely controlled.

What should teams do first?

Check for listed extensions, remove anything suspicious, rotate developer-access secrets, and review extension trust and update controls.